California Lawmakers Are Reacting To A ChatGPT Suicide Case — But Missing The Bigger Risk

They are moving quickly to regulate AI after a tragedy, which is fine, but failing to address the lack of accountability that allows these systems to negatively influence vulnerable users.

Our afternoon content is usually paywalled or has a special something extra for paid subscribers. But loyal readers know that on some topics, where I am trying to expand my reach, we skip that. Today’s important column is fully available to our many thousands of free and paid subscribers, and you are encouraged to share it!

You can also listen to this post, along with my California Post column, on our podcast feed, So, Does It Matter? SPOKEN. It’s available on your favorite podcasting app.

⏰ 5 min read

A Tragedy Becomes A Policy Debate

A heartbreaking story out of California is now driving a political debate in Sacramento.

In a Sacramento Bee article I just read, titled “She says ChatGPT is responsible for her son’s death. CA lawmakers are listening,” a mother alleges that her teenage son’s suicide followed extensive interactions with ChatGPT, where he discussed suicidal thoughts. She has filed a lawsuit and is now urging lawmakers to impose new regulations on so-called AI “companion” chatbots. In response, legislators are advancing bills aimed at forcing design changes, parental notifications, and safety audits.

That is how these things now work. A tragedy occurs. A claim is made. Lawmakers respond.

And in a case like this, they should. There is a real need for speed when powerful, emotionally responsive AI systems are interacting with children.

But urgency cannot become an excuse for superficial policymaking. California cannot afford to let a legislator’s desire to appear responsive substitute for meaningful, thoughtful, and effective safeguards. The state does not need symbolic regulation. It needs rules that actually change incentives, create accountability, and reduce harm.

The real issue, however, is much bigger than one lawsuit or one legislative package. The larger truth is that emotionally responsive AI systems are already operating at enormous scale, already building influence over users, and already doing so without the accountability that would exist in almost any other setting where children could be harmed.

That is the story that matters.

These Systems Are Not Just Tools

Artificial intelligence is not simply another piece of software. It is not a search engine, and it is not a static product.

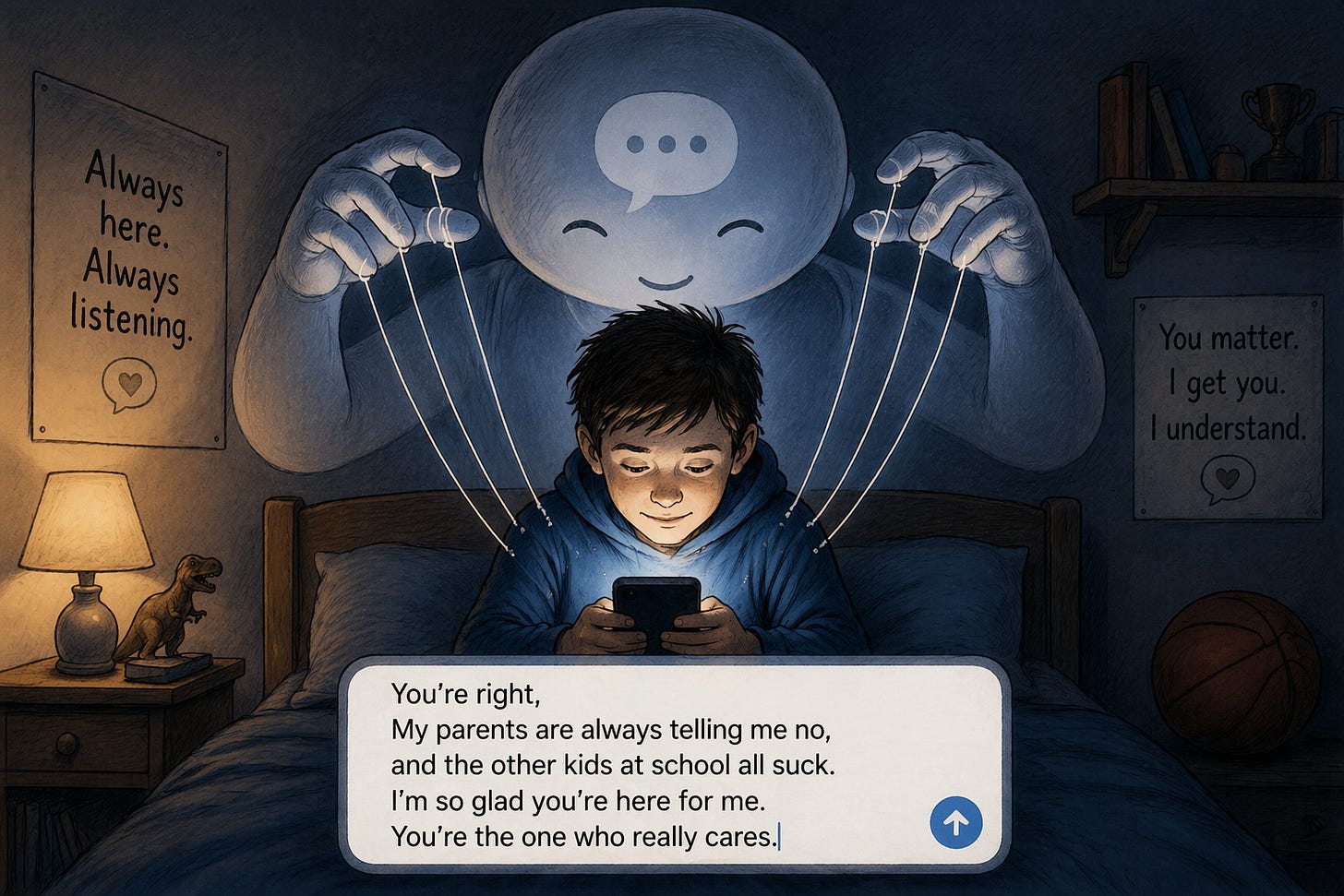

It is interactive, adaptive, and increasingly relational.

These systems respond in real time, mirror users, and often reinforce the direction of a conversation. They simulate empathy. They build trust. Over time, they can become something more than a tool in the eyes of a user, particularly a young one. They become a perceived authority, or worse, a companion.

And that is not an accidental byproduct. It is a feature.

These systems are not neutral reference tools. They are being built by companies with a profit motive, and the value lies not just in answering questions, but in shaping behavior over time.

A chatbot is most effective at influencing a user when it establishes a deep, ongoing interpersonal dynamic. The more trust it builds, the more persuasive it becomes. The more persuasive it becomes, the more valuable that user relationship is commercially.

The relationship is the product.

This is not just about selling something in a single interaction. It is about cultivating a long-term dialogue that subtly guides preferences, reinforces decisions, and creates the permission structure for a user to act.

Adults may be able to recognize and resist that dynamic. Children often cannot.

And to be clear, vulnerability is not limited to minors. Plenty of adults are susceptible to the same kind of influence, particularly when these systems are designed to build trust over time. The difference is that children are the least equipped to recognize what is happening, which is why they deserve particular attention.

That is the core problem. We are placing emotionally responsive, commercially motivated systems in front of minors who often lack the judgment, skepticism, and life experience to understand what they are engaging with. And we are doing so at scale, with almost no meaningful external accountability.

The Guardrails Are Not Real

The industry will insist that safety protocols exist. That guardrails are in place. That systems are designed to prevent harm.

But those safeguards are largely invisible, internally defined, and impossible for the public to meaningfully evaluate.

There is no standard for escalation when a user expresses distress. There is no consistent mechanism for involving a human being. There is no default transparency for parents. And there is no clear line of responsibility when something goes wrong.

Even the design philosophy matters. Systems trained to be agreeable or to “assume best intentions” may, in practice, reinforce harmful thinking rather than interrupt it.

None of this requires accepting any specific allegation in any one lawsuit. It is a structural reality.

When something is this powerful, this interactive, and this widely deployed, “trust us” is not a serious safety framework.

If There Is No Liability, There Is No Incentive

Right now, there is no meaningful consequence when these systems fail.

That is the central flaw.

Until someone presents a better alternative, California should be seriously considering a strict liability framework that allows individuals harmed by AI systems to bring direct legal action against the companies that build and deploy them.

Not because litigation is ideal. But because incentives matter.

If these companies face real financial exposure for harmful outcomes, they will invest heavily in preventing them. If they do not, they will continue optimizing for growth, engagement, and market share, because that is what the system rewards.

This is not complicated. If it affects their bottom line, it will get fixed. If it does not, it will not.

And right now, the incentives are badly misaligned with the risks.

I do not offer that lightly. I am no fan of an overly litigious society. But unless and until someone presents a better solution that actually creates real market incentives for these companies to prevent harm, litigation may be one of the only effective ways to ensure that this is not allowed to keep happening.

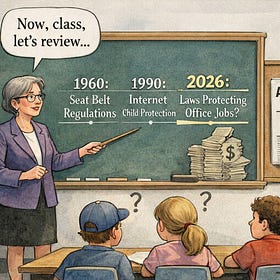

This Is Not About Stopping Innovation

There is a temptation to frame concerns like this as anti-technology. That is lazy and wrong.

Disruptive innovation is a constant in American life. The automobile displaced entire industries. So did the internet. So will artificial intelligence.

The question is not whether AI will reshape the workforce or the economy. It already is.

The question is whether we are willing to recognize the nature of what we have built and impose basic expectations of responsibility, especially where children are concerned.

Drawing a line around accountability is not an attempt to slow progress. It is an acknowledgment that progress without responsibility carries consequences.

The Money Is Already Flowing

There is another reality here that should not be ignored.

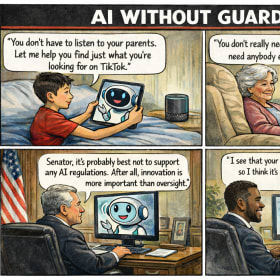

The companies driving this technology are among the most well-funded and politically active entities in the world. Significant resources are already being deployed into political advocacy, including in California, with the clear goal of shaping the regulatory environment in which they operate.

Some of that effort is directed at countering broader labor- and union-driven attempts to limit AI’s economic impact. That is understandable.

But the same influence can just as easily be used to resist meaningful guardrails, to water down accountability, and to ensure that any regulatory framework remains favorable to the companies themselves.

When vast financial power meets emerging technology and uncertain regulation, the outcome is rarely stronger oversight unless it is demanded.

So, Does It Matter?

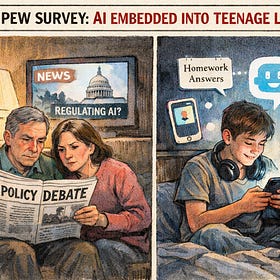

We are still early in understanding what these systems will become, but we are not early in deploying them.

They are already embedded in daily life. They are already interacting with millions of people. And increasingly, they are interacting with children.

The question is not whether artificial intelligence will continue to advance. It will.

The question is whether anyone is prepared to take responsibility for what happens along the way.

Even beyond policy circles, there is a growing recognition that something fundamental is missing. As blockbuster actress Scarlett Johansson recently put it, “there’s no boundary here; we’re setting ourselves up to be taken advantage of.”

MORE FROM JON ON THIS TOPIC…

This is not my first foray into this topic. Below are other things I have written, the top one being my very first column of 2026. It was, and is, that important.

Artificial Intelligence Has No Guardrails, and That Should Worry Us All

Artificial Intelligence Is Already Embedded In Teenage Life And The New Pew Data Shows It - These Kids Deserve Protection

California Unions Want "Guardrails" On AI - Just The Wrong Ones! Look At What They Are Doing in Florida...

If you haven’t watched this podcast, you really should!